The Data Bottleneck: Why AGIBOT is Open-Sourcing its Real-World Training Library

This article is part of AGIBOT AI Week — a collaboration between Humanoids Daily and AGIBOT.

For years, the primary hurdle in the race toward Artificial General Intelligence (AGI) has been the physical data bottleneck. While large language models can ingest vast swaths of the internet to learn human syntax, physical AI (the intelligence that governs robots) has lacked a comparable high-fidelity library of the physical world. Most robotics data is generated in sanitized laboratory settings or through repetitive, scripted motions that fail to prepare a machine for the unpredictability of a crowded commercial space or a cluttered home.

Today, as part of its inaugural AI Week, AGIBOT announced a significant step toward solving this infrastructure deficit. The company has released AGIBOT WORLD 2026, a heterogeneous, open-source dataset designed to systematically support the five core research pathways of embodied intelligence. By moving beyond controlled demonstrations and providing a robust foundation of real-world interactions, AGIBOT is positioning itself not just as a hardware manufacturer, but as a primary data architect for the robotics industry.

Beyond the Script: The Free-Form Strategy

The core differentiator of AGIBOT WORLD 2026 lies in its collection methodology. Traditional datasets often rely on rigid, repetitive demonstrations that limit a robot’s ability to generalize. AGIBOT has instead implemented a "free-form" data collection strategy.

In this model, teleoperators perform tasks dynamically based on real-time conditions rather than following a pre-set script. This approach introduces environmental variability and task complexity that are often missing from academic datasets, which in turn helps a trained robot generalize across different object categories and initial configurations. To further bridge the gap between digital training and physical execution, AGIBOT is releasing 1:1 digital twin simulation data alongside every real-world episode.

Closing the Loop Between Hardware and Intelligence

Data is only as useful as the hardware that captures it. The AGIBOT WORLD 2026 dataset is gathered using the company’s G2 hardware platform, a system built for high-performance joint actuation and multi-modal sensing.

To ensure the data reflects how a robot operates as a unified system, AGIBOT integrates several technical innovations:

- Whole-Body Control (WBC): This enables the coordinated movement of arms, waist, and hands, allowing for fluid, integrated motions rather than isolated mechanical steps.

- Force-Controlled Collection: Beyond simple motion trajectories, the system captures physical interactions, including contact dynamics and force feedback.

- Multi-Modal Integration: The pipeline synchronizes RGB(D) video, tactile signals, LiDAR point clouds, and full-body joint states into a unified stream.

By capturing these "physical priors," the dataset provides a more accurate representation of the complexities involved in real-world robot behavior.
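To make the idea of a "unified stream" concrete, here is a minimal sketch of one common way to align sensors that tick at different rates: pick a reference stream (here, the camera) and match every other stream to it by nearest timestamp. This is an illustration only, not AGIBOT's pipeline. The stream names, sample rates, and the `max_skew` tolerance are all assumptions made for the example.

```python
from bisect import bisect_left


def nearest_index(timestamps, t):
    """Return the index of the timestamp closest to t (list sorted ascending, non-empty)."""
    i = bisect_left(timestamps, t)
    if i == 0:
        return 0
    if i == len(timestamps):
        return len(timestamps) - 1
    # Pick whichever neighbour is closer to t.
    return i if timestamps[i] - t < t - timestamps[i - 1] else i - 1


def align_streams(reference, others, max_skew):
    """Match each stream in `others` to the reference timestamps.

    reference: sorted list of timestamps in seconds
    others:    dict of stream name -> sorted list of timestamps in seconds
    Returns one dict per reference timestamp, mapping each stream name to the
    index of its matched sample, or None if no sample lies within max_skew.
    """
    frames = []
    for t in reference:
        frame = {"t": t}
        for name, ts in others.items():
            if not ts:
                frame[name] = None
                continue
            j = nearest_index(ts, t)
            frame[name] = j if abs(ts[j] - t) <= max_skew else None
        frames.append(frame)
    return frames


# Hypothetical timestamps: a slow camera, faster joint states, sparse LiDAR.
camera_ts = [0.00, 0.10, 0.20]
streams = {
    "joint_state": [0.00, 0.02, 0.04, 0.06, 0.08, 0.10, 0.12, 0.19],
    "lidar": [0.01, 0.11],
}
aligned = align_streams(camera_ts, streams, max_skew=0.02)
for frame in aligned:
    print(frame)
```

In this toy run the last camera frame has no LiDAR sample within tolerance, so it is marked `None` rather than silently paired with stale data. A real pipeline would also have to handle clock drift and hardware triggering, which this sketch ignores.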

An Industrial Pipeline for Imitation Learning

The release of AGIBOT WORLD 2026 will occur in five distinct phases, with Phase 1 focusing specifically on imitation learning. This initial release includes hundreds of hours of data primarily focused on commercial and service environments.

What makes this release particularly valuable to researchers is the hierarchical annotation framework. Each episode is paired with:

- Task-level descriptions and step-by-step action sequences.

- Atomic skill labels (such as "pull" or "place") and object attributes like name and color.

- Error-recovery trajectories, which are retained and annotated to help models learn how to correct course when a task fails.
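For readers who think in code, the three annotation layers above could be modeled roughly as follows. This is a hypothetical sketch: the class names, field names, and the sample episode are invented for illustration and do not reflect the dataset's actual schema, which is documented by AGIBOT.

```python
from dataclasses import dataclass, field


@dataclass
class Step:
    """One entry in the step-by-step action sequence (hypothetical fields)."""
    description: str
    skill: str                  # atomic skill label, e.g. "pull" or "place"
    object_name: str
    object_color: str
    is_recovery: bool = False   # True when the step corrects an earlier failure


@dataclass
class EpisodeAnnotation:
    """Task-level description plus its ordered steps."""
    task: str
    steps: list[Step] = field(default_factory=list)

    def recovery_steps(self):
        """Return only the steps annotated as error recovery."""
        return [s for s in self.steps if s.is_recovery]

    def skills_used(self):
        """Return the distinct atomic skills in order of first appearance."""
        return list(dict.fromkeys(s.skill for s in self.steps))


# An invented episode, not taken from the dataset.
episode = EpisodeAnnotation(
    task="Move the red cup from the drawer to the shelf",
    steps=[
        Step("Open the drawer", "pull", "drawer", "white"),
        Step("Set the cup on the shelf", "place", "cup", "red"),
        Step("Reposition the cup after it tips", "place", "cup", "red", is_recovery=True),
    ],
)
print(episode.skills_used())
print(len(episode.recovery_steps()))
```

Keeping recovery steps in the same sequence, flagged rather than filtered out, mirrors the article's point that failed attempts are retained so models can learn to correct course.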

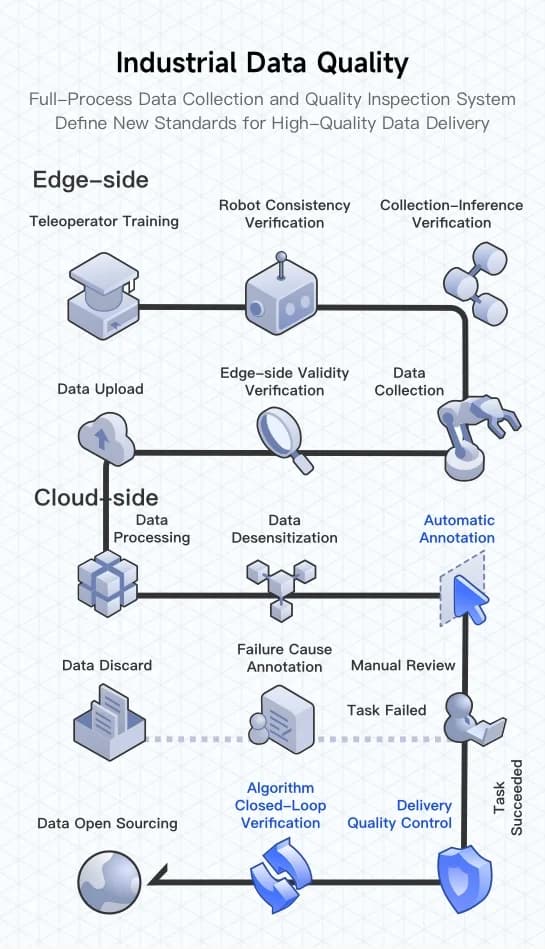

Each data episode undergoes a rigorous "industrial-grade" cleaning process, moving from edge-side validity verification to cloud-side automatic annotation and manual review. This ensures that the data is not just voluminous, but "training-ready" for large-scale model development.
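The three stages named here (edge-side validity check, cloud-side automatic annotation, manual review) can be pictured as a simple chain of filters. The sketch below is an assumption-heavy illustration: the specific validity rule, the placeholder annotator, and the `reviewer` callback are all invented, since the article does not describe what each stage actually checks.

```python
def edge_validity_check(episode):
    """Assumed edge-side rule: episode has frames and strictly increasing timestamps."""
    ts = episode["timestamps"]
    return len(ts) > 0 and all(a < b for a, b in zip(ts, ts[1:]))


def cloud_auto_annotate(episode):
    """Placeholder for cloud-side annotation: attach a draft label for review."""
    return {**episode, "draft_label": "unlabeled", "reviewed": False}


def manual_review(episode, approved_label):
    """Record the label a human reviewer approved."""
    return {**episode, "label": approved_label, "reviewed": True}


def clean(episodes, reviewer):
    """Run every episode through the three stages; keep only approved ones.

    reviewer: callable that takes a draft episode and returns an approved
    label, or None to reject the episode.
    """
    ready = []
    for ep in episodes:
        if not edge_validity_check(ep):
            continue  # dropped at the edge, never uploaded
        draft = cloud_auto_annotate(ep)
        label = reviewer(draft)
        if label is not None:
            ready.append(manual_review(draft, label))
    return ready


# Invented episodes: one valid, one with out-of-order timestamps, one rejected by review.
raw = [
    {"id": "a", "timestamps": [0.0, 0.1, 0.2]},
    {"id": "b", "timestamps": [0.0, 0.2, 0.1]},
    {"id": "c", "timestamps": [0.0, 0.1]},
]
training_ready = clean(raw, reviewer=lambda ep: "place" if ep["id"] == "a" else None)
print([ep["id"] for ep in training_ready])
```

Filtering at the edge first keeps obviously broken recordings from consuming cloud compute or reviewer time, which is one plausible reason to order the stages this way.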

Democratizing Embodied AI

AGIBOT’s decision to open-source this data reflects a broader shift in the company’s philosophy. While many startups guard their proprietary data as a competitive moat, AGIBOT is betting on an infrastructure-driven approach to accelerate the entire ecosystem.

"High-quality data is foundational to unlocking the next generation of robotic capabilities," the company noted in its release. By providing the community with a "million-scale" real-world dataset, AGIBOT aims to transition embodied AI from the isolation of the research lab into the complexity of the real world.

Researchers and developers can access the full dataset and documentation at agibot-world.com.
